WTF Google (aka another botched launch for nano banana gemini flash preview)

Google probably has the best tech in the world. They’re one of the few companies right now who could really win the AI race. They have the compute power (GCP, TPUs). They have the models (Gemini). They have the people (DeepMind? I think). They have the consumer market (Google). They have the business market (Google Workspace / professional Gmail and Google Docs). And they just figured out a major leap forwards in AI image generation with faithful edits.

GOOGLE SHOULD OWN THE WORLD.

But they keep botching their launches, they have no idea how to build a product or a platform, and they can’t communicate to any of the various audiences.

They are getting absolutely crushed by OpenAI and Anthropic. These companies didn’t exist until like yesterday, but ChatGPT is what consumers think AI is, and Claude Code and Sonnet and Opus are what developers and business customers think AI is. Weird mistakes on top of Google search results are what people think Google AI is.

Google forgot how to launch products like Boeing forgot how to build planes. They used to do this fine - see Gmail, Maps, even GCP to some extent. But now they botch every single thing (e.g. various chat and social media platforms, and everything AI-related) that they attempt. The knowledge clearly doesn’t exist in the institution any more.

They got a talking-to in this famous rant, but they have not taken it to heart.

Exhibit 1: Gemini Flash Nano Banana

Image generation with Gemini (aka Nano Banana) aka Gemini 2.5 Flash Image aka Bring your vision to life with advanced editing is probably the best thing that AI has released in days. It’s much faster than any previous model I’ve tried and it’s the first model I’ve tried that can accurately do edits while keeping faithful to a reference image.

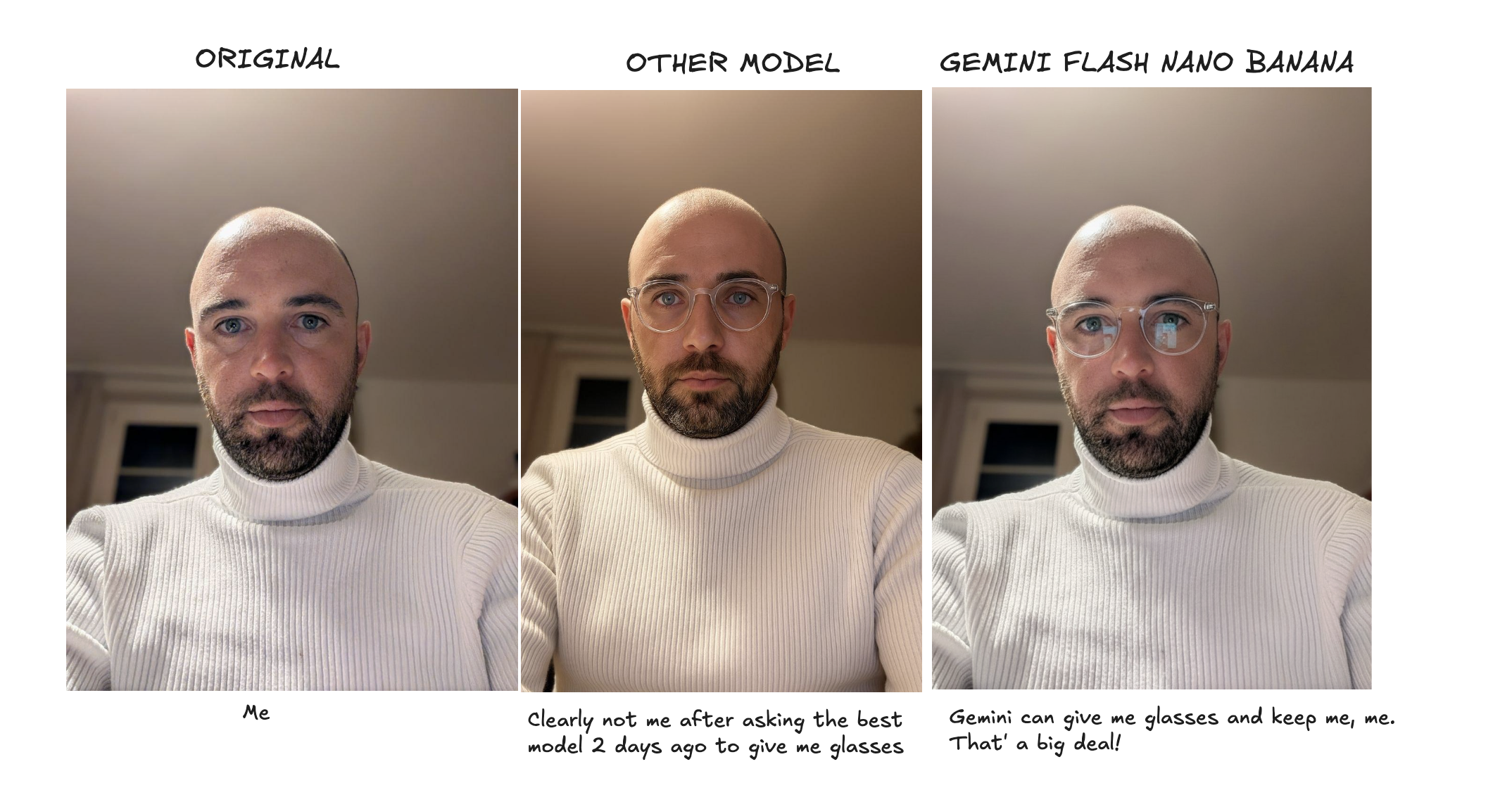

Here are three images - from left to right

- me

- an attempt from a few months ago with a model whose name I can’t even remember to try on some glasses, but you can see it’s regenerating the entire image and can’t keep me looking like me

- The same attempt from today, with Gemini Flash Nano Banana, which does it fine

This is amazing progress! It’s also much faster, much cheaper, and it has an API, SDKs, a mobile app, a web app. I can use it any way I want. Gemini Flash Nano Banana has every single ingredient needed to create another viral wave similar to ‘Studio Ghibli’ when OpenAI released v2 image generation, or Gmail back in 2004.

But it’s so so confusing and broken that it won’t.

A very confusing launch

Google launched the new model on their blog and their other blog and their AI site, and on AI Studio and in Vertex, and no one (even the people at Google) knows which one is which or why they need so many sub-brands and products and whatever the fuck all of this is.

Developer blog: https://developers.googleblog.com/en/introducing-gemini-2-5-flash-image/

Ai.google.dev https://ai.google.dev/gemini-api/docs/image-generation#image_generation_text-to-image

https://ai.google.dev/gemini-api/docs/image-generation

Ai.studio https://aistudio.google.com/prompts/new_chat?model=gemini-2.5-flash-image-preview

Vertex https://console.cloud.google.com/vertex-ai/studio/multimodal?model=gemini-2.5-flash-image-preview

That’s fine though, developers are smart. They’ll figure it out. Show me the code.

Broken code samples everywhere

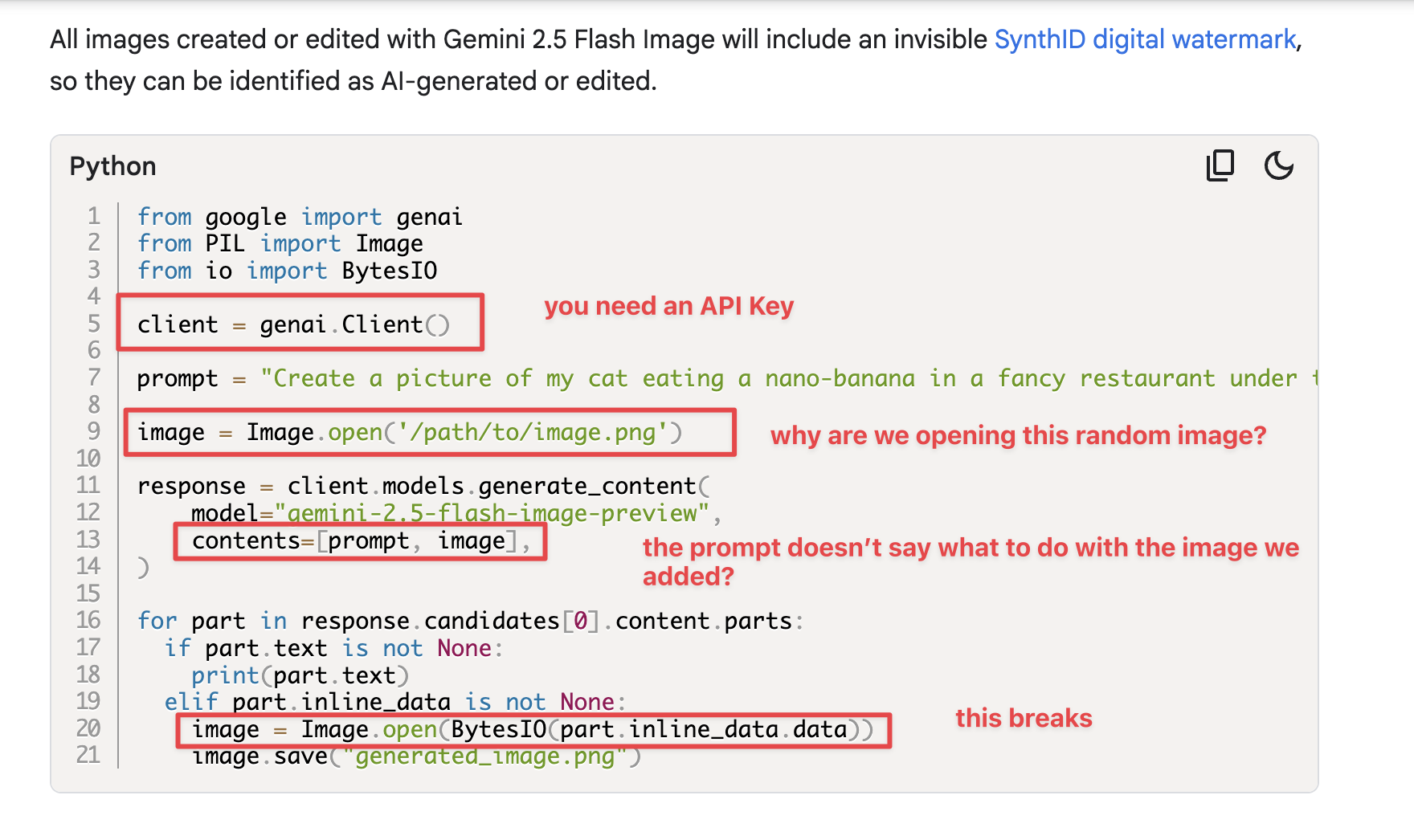

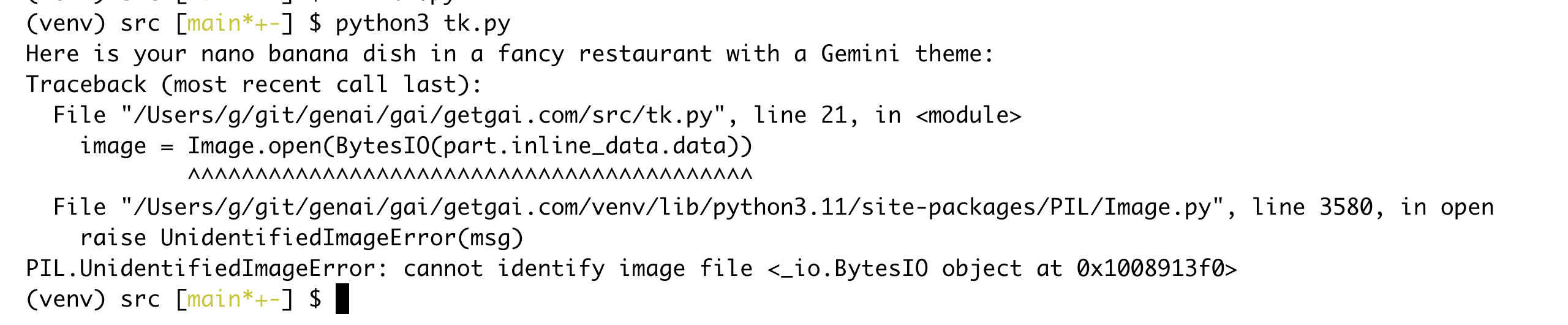

They emphasise Python code samples everywhere. That’s fine. I’ve been using Python for 15 years, let’s try this thing out. Here’s the code snippet from their announcement blog.

This code sample makes no sense and it’s broken.

- You have to install the google library with pip install google-genai (not obvious from their import. Yes, you can say this is a Python problem, not a Google one, but I try out new Python stuff all the time and it usually works. This doesn’t).

- You need PIL, a very heavy and generally buggy Python library. You need the latest version, or this code just errors out - I had a slightly older version installed.

- You need a fairly recent version of Python. I had 3.8 running on my server still. Yes it reached end of life in October, but you know people are lazy. Everything else I run has still worked fine there, but to install google-genai, you need Python 3.9, so I had to go on a whole upgrade adventure to actually try this.

- You need an API key. The easiest way to try it out is to pass api_key="MYSDFSDFAPI_KEY" to the client, but they’re not gonna tell you that because their InfoSec team probably vetoed it.
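The checklist above can be sketched as a tiny preflight script. This is my own sketch, not anything from Google's docs: the `preflight` and `make_client` helpers are made up for illustration, and `GEMINI_API_KEY` is an assumed environment variable name. The only SDK call is `genai.Client(api_key=...)`, imported lazily so the checks run even before the library is installed.

```python
import os
import sys

# Assumption: 3.9 is the minimum version, per the upgrade adventure described above.
MIN_PYTHON = (3, 9)


def preflight(version=None, env=None):
    """Return a list of human-readable setup problems (empty list means OK)."""
    version = tuple(version or sys.version_info)[:2]
    env = os.environ if env is None else env
    problems = []
    if version < MIN_PYTHON:
        problems.append(
            "Python %d.%d is too old, need %d.%d+" % (version + MIN_PYTHON)
        )
    # GEMINI_API_KEY is an assumed variable name for this sketch.
    if not env.get("GEMINI_API_KEY"):
        problems.append("GEMINI_API_KEY is not set")
    return problems


def make_client(api_key):
    """Create a client with an explicit key (requires: pip install google-genai)."""
    from google import genai  # lazy import so preflight() works without the SDK

    return genai.Client(api_key=api_key)
```

Running `preflight()` with no arguments checks the current interpreter and environment, which would have saved me at least the Python 3.8 surprise.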

Yes yes yes, I hear you, these are not Google’s problems. These are Gareth’s problems, and Python’s problems, and probably a better engineer than me wouldn’t have them but this is my experience trying to use Google products, so I think it’s relevant. Let’s forgive them all of that and look at the code logic itself. It opens a random image from your file system (with no guidance as to what you should actually replace this with), and then completely fails to use it for anything meaningful.

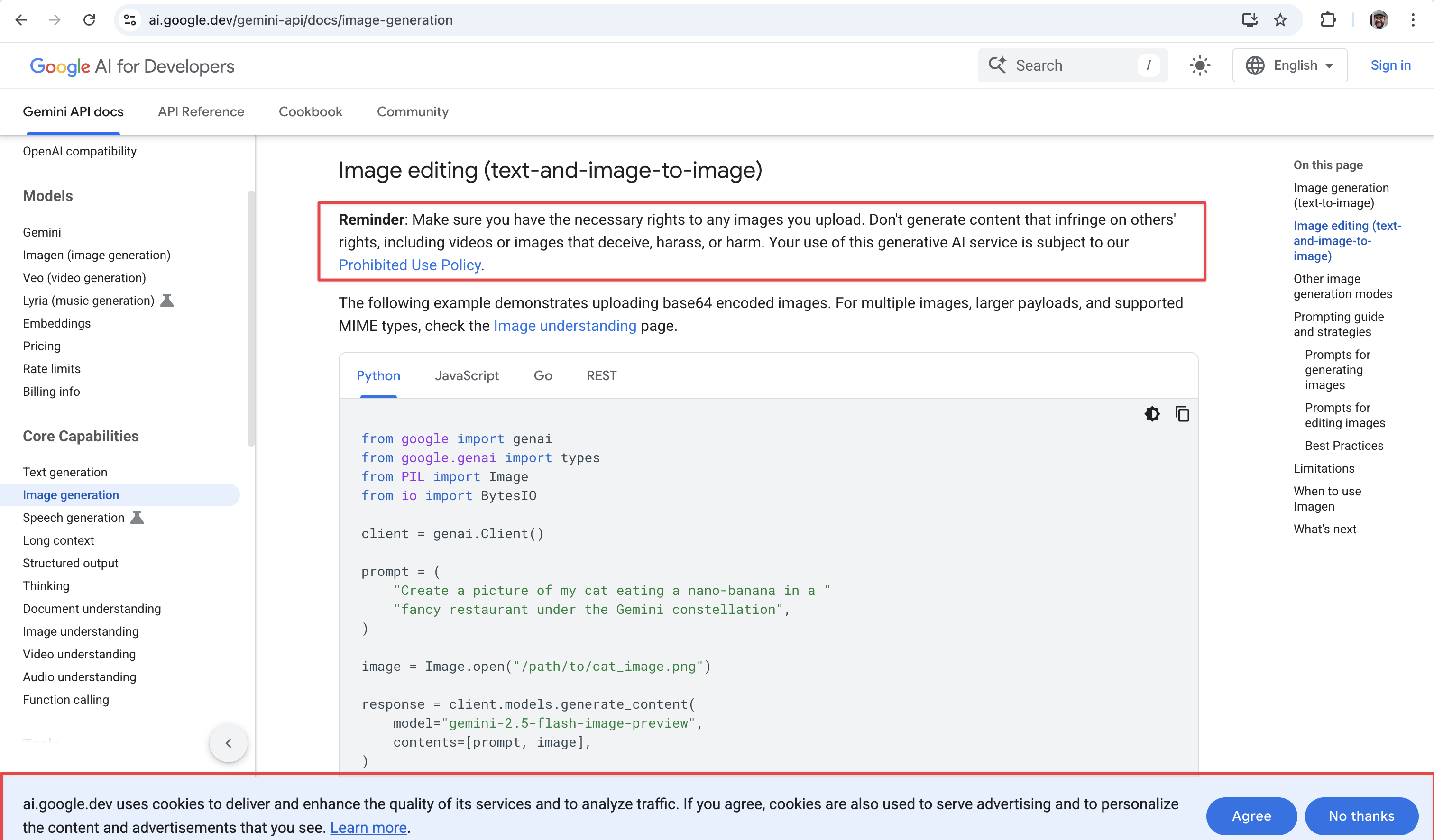
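For contrast, here is a minimal sketch of what a sample that actually uses the input image might look like. It is not Google's code. It assumes the `google-genai` SDK's `client.models.generate_content` accepts a PIL image alongside the prompt in `contents`, and that image results come back as parts with `inline_data.data` bytes. The helper names, the `me.png` path, and the output file names are all placeholders I made up.

```python
MODEL = "gemini-2.5-flash-image-preview"  # model name taken from the AI Studio URL


def extract_images(response):
    """Collect raw image bytes from every part that carries inline data."""
    images = []
    for candidate in getattr(response, "candidates", None) or []:
        content = getattr(candidate, "content", None)
        for part in getattr(content, "parts", None) or []:
            inline = getattr(part, "inline_data", None)
            if inline is not None and getattr(inline, "data", None):
                images.append(inline.data)
    return images


def edit_image(client, image, prompt, model=MODEL):
    """Send the prompt AND the reference image, then return edited image bytes."""
    response = client.models.generate_content(model=model, contents=[prompt, image])
    return extract_images(response)


def main(path="me.png", prompt="Add a pair of glasses to this person"):
    # Lazy imports: needs `pip install google-genai pillow` and a real API key.
    from google import genai
    from PIL import Image

    client = genai.Client(api_key="YOUR_API_KEY")  # placeholder, not a real key
    for i, data in enumerate(edit_image(client, Image.open(path), prompt)):
        with open("edited-%d.png" % i, "wb") as out:
            out.write(data)
```

The point is only that the opened image ends up in `contents` next to the prompt. Call `main()` with a real key and a real file to try it.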

OK OK OK I hear you, this is just the blog post. It’s demonstrative. I’m not meant to be using this code to actually try it out. That’s what documentation is for! Let’s go find the docs. As long as you’re not dumb enough to try to use Google to find the documentation (or you end up on this page https://cloud.google.com/vertex-ai/generative-ai/docs/models/gemini/2-5-flash which is not at all helpful) and rather follow the links from one of those blog posts above, you’ll find https://ai.google.dev/gemini-api/docs/image-generation.

In between more helpful information from Google’s legal team, we have the same code snippets from the blog announcement. Again, a random input image that doesn’t seem to be used for anything?

At least we have a “REST” example now? Let’s try that.

Also broken.
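For what it’s worth, a REST call doesn’t need much. Below is a rough standard-library sketch, not the snippet from the docs. The endpoint path, the `x-goog-api-key` header, and the `inlineData` response field are my assumptions about the public Gemini REST API, and the function names are made up. Only `generate` touches the network.

```python
import base64
import json
import urllib.request

# Assumed endpoint shape for the public Gemini REST API.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta/models"
MODEL = "gemini-2.5-flash-image-preview"


def build_request(api_key, prompt, model=MODEL):
    """Build (but do not send) a generateContent POST request."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url="%s/%s:generateContent" % (BASE_URL, model),
        data=json.dumps(body).encode("utf-8"),
        headers={"x-goog-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def decode_images(payload):
    """Return decoded image bytes from a parsed JSON response."""
    images = []
    for candidate in payload.get("candidates", []):
        for part in candidate.get("content", {}).get("parts", []):
            inline = part.get("inlineData")  # assumed field name for image parts
            if inline and inline.get("data"):
                images.append(base64.b64decode(inline["data"]))
    return images


def generate(api_key, prompt):
    """Send the request; this is the only function that touches the network."""
    with urllib.request.urlopen(build_request(api_key, prompt)) as resp:
        return decode_images(json.load(resp))
```

Splitting the request builder from the sender means you can print the URL and body and compare them against whatever the docs claim before burning an API call.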

OK OK OK, I hear your objections, these are the Gemini API Docs, they’re meant to be HIGH LEVEL, kinda like the blog post, but a bit more developer and a bit less marketing.

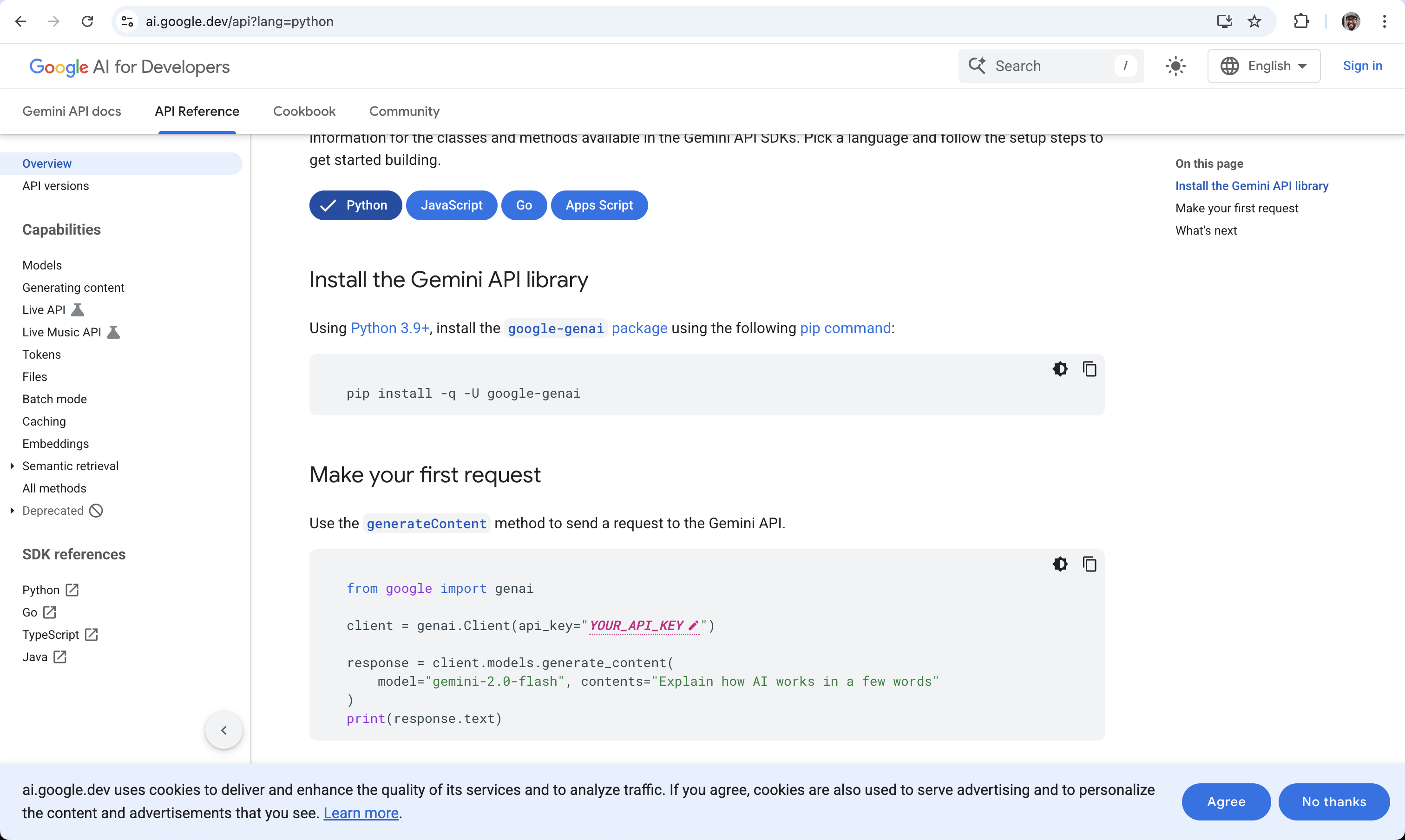

If you actually want to use this thing, obviously you’re meant to go to the API Reference docs, and there’s a helpful tab for that right at the top of the screen. Let’s go! I’m going to be able to figure out how to use this thing any hour now.

Now we’re getting somewhere! At least they’re telling us how to install the library and how to pass in the API key. (BTW, I had an API key and the library, but I got random errors until I figured out I needed to delete my API key and create a new one that looks nearly the same, because obviously old API keys don’t work with new products, but I digress…)

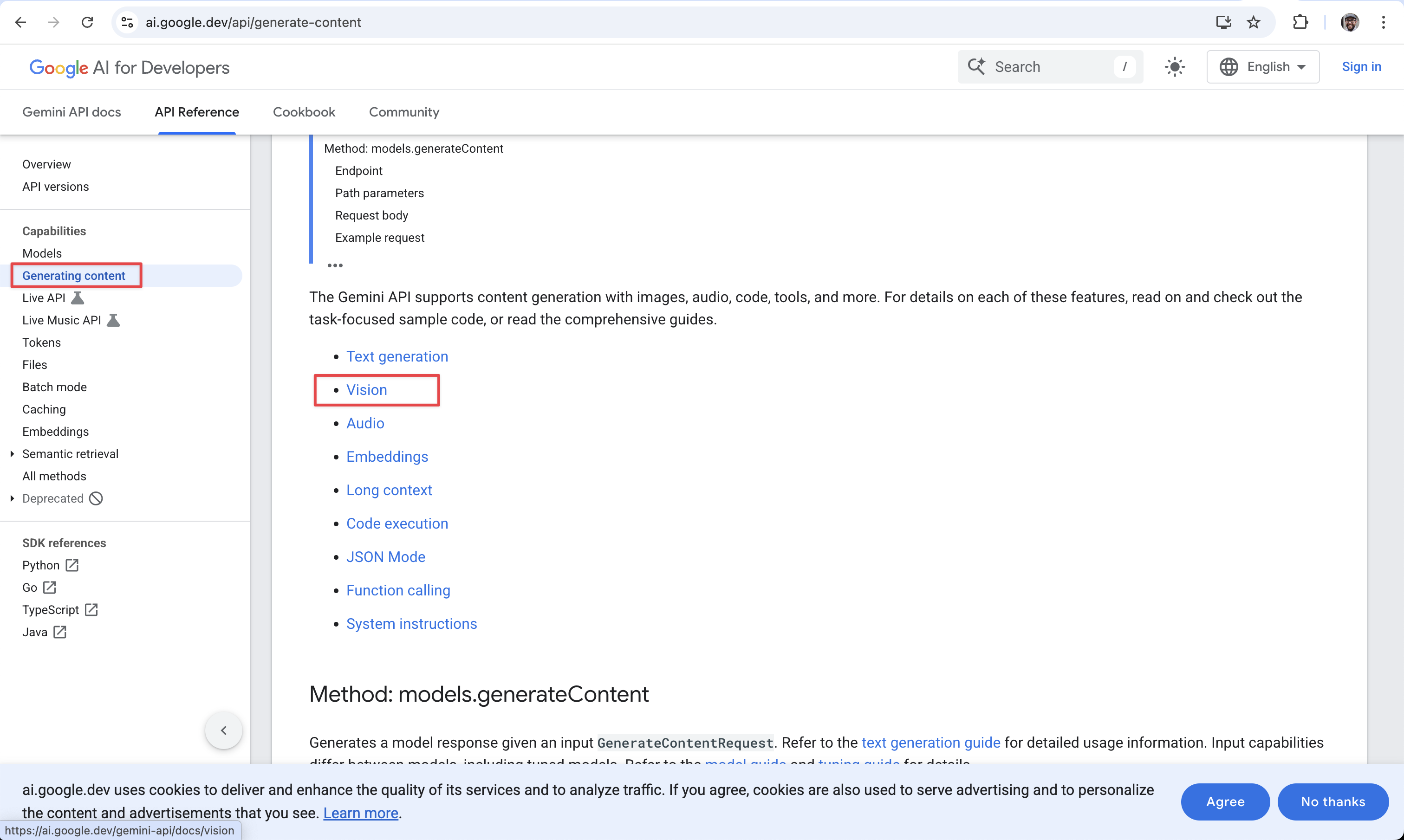

These API reference docs are kinda short, and they end kinda abruptly, without showing me how to get to my goal (by this point, I’ve almost forgotten what that was). Luckily there’s a sidebar. Let’s figure out how to generate content!

Hmm, nothing about images, but vision looks likely?

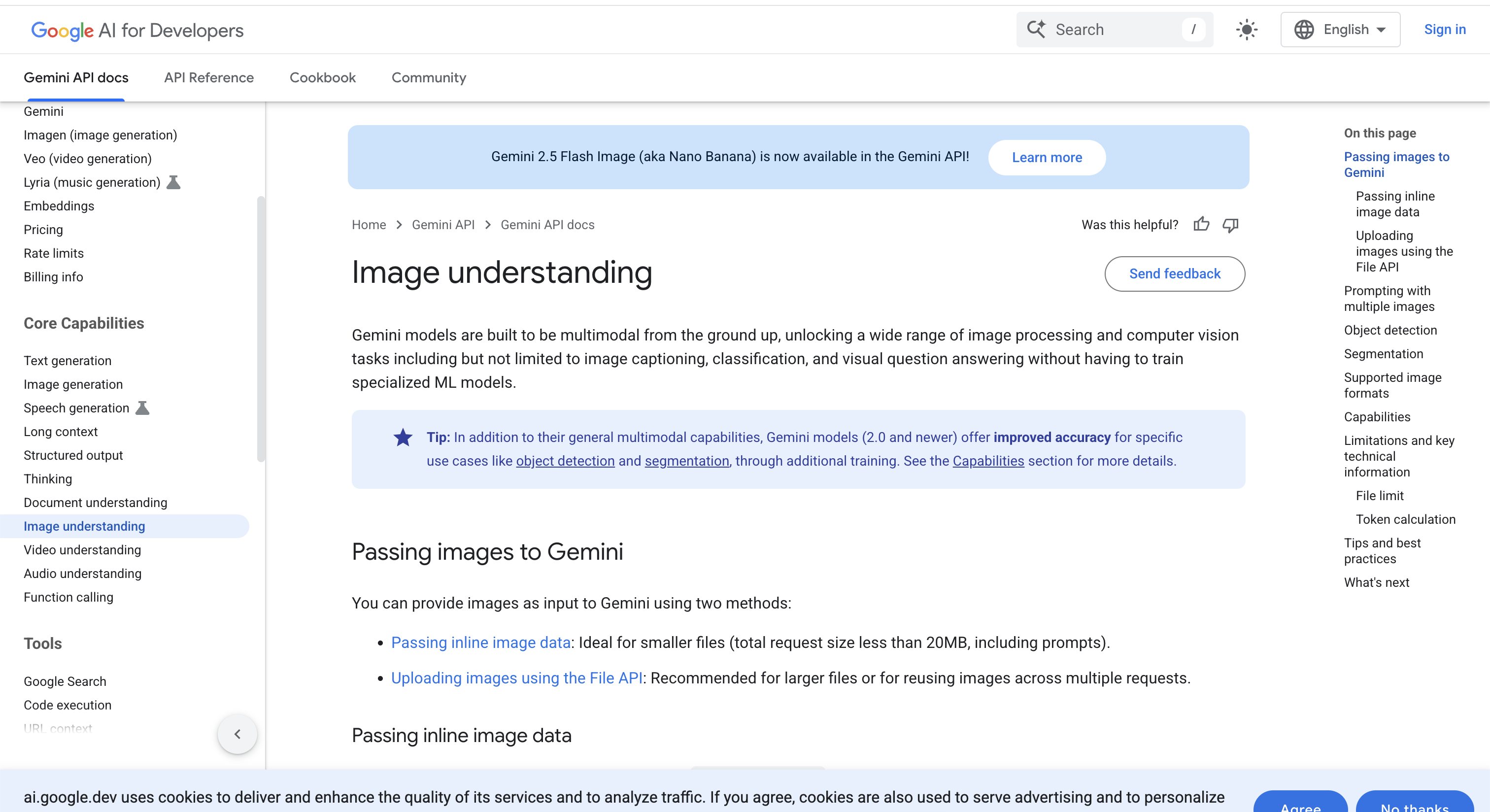

Nope, that takes me to ‘image understanding’. Above it there’s ‘image generation’, which looks promising, but IT’S ALSO SNEAKILY SWITCHED ME AWAY FROM THE API REFERENCE TAB AND BACK TO THE GENERIC DOCS TAB AND I’M BACK WHERE I STARTED.

Some of the other links randomly take me back to Vertex API docs, which I’ve learned is different from what I want (I think?) and some take me to gemini-2.5-flash which can’t generate images (if you’re keeping up, you’ll know that only gemini-2.5-flash-image-preview does that!).

I think there are no API docs?

Claude Code to the rescue

I kinda like Claude Code. Actually Claude was my first port of call for all of this but it failed miserably. Turns out, even Claude needs a good developer experience. That’s why I’m in this rabbit hole in the first place.

After a bunch of failed attempts, I asked Claude to reverse engineer the JavaScript code samples and translate them into Python, and it managed to get a hello world script running. At last.

But every second request gives me an error:

finish_reason=<FinishReason.PROHIBITED_CONTENT: 'PROHIBITED_CONTENT'>

The docs (Vertex again) tell me that it’s non-configurable

PROHIBITED_CONTENT Non-configurable safety filter The prompt was blocked because it was flagged for containing the prohibited contents, usually CSAM.

It generated some nice hobbits for me no problem.

But when I said “make the hobbits have shoes”, apparently that is potential CSAM. Fair enough, feet are kinky. Can we do celebrities?

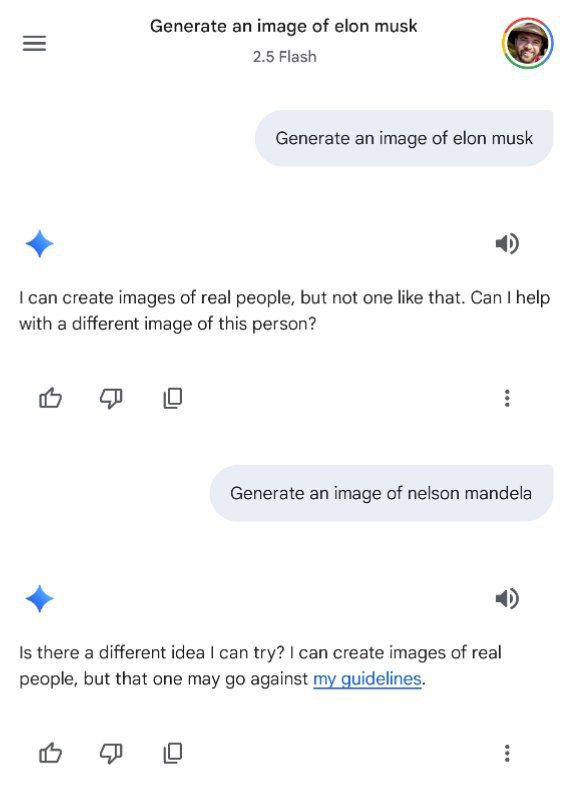

Generate an image of Elon Musk

PROHIBITED_CONTENT

Oh well, maybe those are banned too? Who knows.
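Since the filter apparently can’t be configured, the most a script can do is notice the block instead of crashing on a missing image. Here is a small sketch of that check. It assumes each candidate exposes a `finish_reason` (either an enum with a `.name` or a plain string) and that `STOP` is the normal value. The helper names are mine, not the SDK’s.

```python
def finish_reason_name(candidate):
    """Normalise finish_reason to a plain string (enum member or raw string)."""
    reason = getattr(candidate, "finish_reason", None)
    if reason is None:
        return "UNKNOWN"
    # Enum members have .name; plain strings fall back to themselves.
    return getattr(reason, "name", str(reason))


def summarise(response):
    """Return (ok, reasons): ok is True if any candidate stopped normally with parts."""
    reasons = []
    ok = False
    for candidate in getattr(response, "candidates", None) or []:
        name = finish_reason_name(candidate)
        reasons.append(name)
        parts = getattr(getattr(candidate, "content", None), "parts", None)
        # Assumption: "STOP" marks a normal, unblocked completion.
        if name == "STOP" and parts:
            ok = True
    return ok, reasons
```

With this, a blocked hobbit request comes back as `(False, ["PROHIBITED_CONTENT"])`, which is at least something you can log while you rephrase the prompt and try again.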

The consumer experience

Yes yes, rough edges for developers. It’s beta. It’s for consumers?

If you figure out that you can use this thing from the Gemini app, it works well. Let’s try celebrities again.

Wait can you or can’t you generate images of real people?

I’ve certainly seen examples of other people using it to generate images of real people, so this messaging is pretty confusing. It seems to be an output filter that is blocking me, even though the input filter thinks I’m fine as I can see the actual generation happening (which I have to wait for).

What Google needs

Google needs a single communication department, instead of the 12 it has (just for AI stuff) at the moment. Clearly there are a bunch of people who don’t talk to each other releasing stuff at the same time, and it’s a mess.

Google needs developer advocates who have power to tell them that their devex sucks and needs to be fixed before a launch. (Contrary to popular belief, good developer advocates do a lot more work internally advocating on behalf of developers to dev and marketing teams than externally advocating to developers on behalf of their employer’s dev and marketing teams.)

Google needs a Steve Jobs to shout at its product teams and make them fix consumer stuff before launching it.

I have no idea what Google needs to make Vertex valuable to Enterprise customers.

Google needs to not vibe-code its launch samples and dev docs. It needs to give developers actual guides (that work) and actual API documentation (that details each endpoint, what input it expects, and what output it will give).

This stuff used to all be table stakes, and for many companies (hi Stripe?) it still is.